Analog? Digital? Both? In professional audio, many choices exist, but there’s not enough time to make the wrong ones. We regularly hear claims floating about, often skewed by particular opinions and interests that tend to color underlying simple truths.

The Merriam-Webster Dictionary defines the noun “analog” as being something that is analogous (similar or related) to something else. For example, an analog can be a food product that represents another, such as inexpensive whitefish “krab” intended to replicate more expensive (real) crab meat, or, for you vegetarians, soybeans processed to look and taste like beef.

If you’ve experienced either of these examples, you know that some products are more successful than others in recreating the essence of the original. The audio world is really no different.

But a fundamental difference between processed food and audio, of course, is that a foodstuff analog can only ever be exactly that – analog. In contrast, audio can be reproduced as an analog OR as a digital representation of the original.

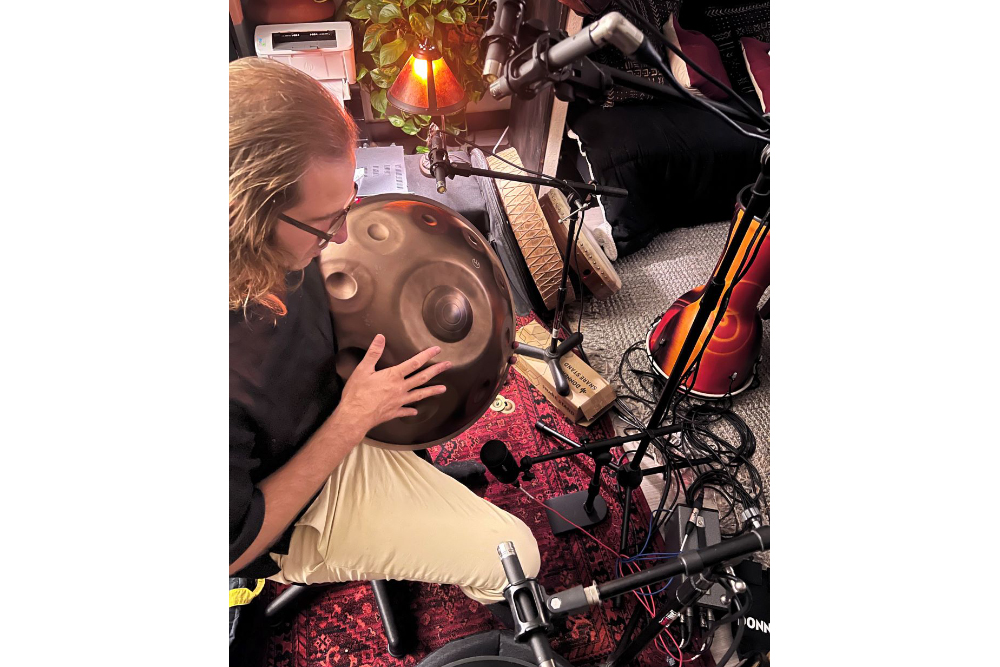

When something makes a sound, such as a musical instrument or a human voice, the vibrations produced travel through the air as an analog of that sound. So, we start with an analog of the original, a close representation of the sound source.

If we’re able to accurately preserve the subtleties and nuances of the original movement of the air throughout the audio system, then we have done our job. But how best to do that?

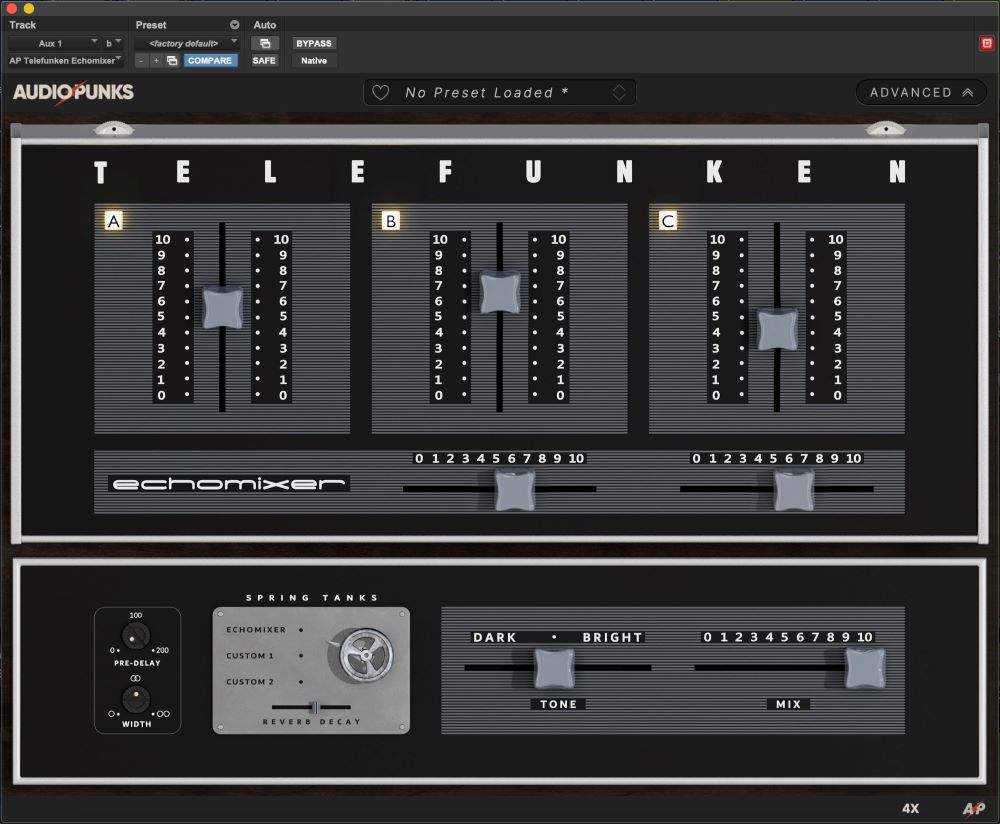

It isn’t just a question of whether it’s better to put together an entirely analog or entirely digital signal path to capture and convey the original sound source.

Both methods offer advantages and disadvantages, and a variety of factors, including circuit design and component choice, can affect how accurately the equipment reproduces the source.

But for all the advances that have brought high-resolution digital audio products to the marketplace, the debate still rages over which sounds “better.” The crux of the matter is how close current digital audio technology, even at high sampling rates and bit depths, can come to replicating full-bandwidth analog audio gear.

Audio, meaning sound within the average range of human hearing (generally accepted as 20 Hz to 20 kHz), moves through the natural world as an analog signal that is continuous in both time and amplitude.

In a digital audio system, a natural sound traveling through the air must be converted after being captured by a microphone.

An analog microphone translates the movements of air on its diaphragm into an electrical signal. That electrical signal must then be converted into a digital signal, a string of zeroes and ones, in order to be transported by, operated on, or stored by the digital audio system that follows.

This is achieved through an analog-to-digital converter, utilizing sampling and quantization.

How You Slice It

Sampling and quantization are like glancing at the speedometer of your car. If you don’t keep a regular eye on your speed, your car might be going faster or slower than you realize.

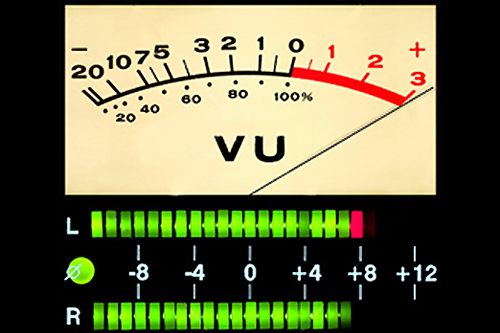

Audio sampling is simply taking regular measurements of a varying analog voltage or current. Because the audio voltage or current is constantly changing, we have to pick moments in time to freeze the audio as a non-varying number.

We must make measurements in a quick enough succession that we don’t miss important changes between measurements. And we must measure with enough resolution that we capture as much detail as we desire.
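The two requirements above, measuring often enough and with enough resolution, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the function name `sample_and_quantize`, the 1 kHz test tone, the 48 kHz sample rate, and the 16-bit depth are not taken from any particular converter design.

```python
import math

def sample_and_quantize(freq_hz, sample_rate_hz, bit_depth, duration_s):
    """Measure a sine wave at regular intervals (sampling) and round each
    measurement to the nearest available integer step (quantization)."""
    # Largest positive code for a signed integer of this bit depth,
    # e.g. 32767 for 16 bits.
    max_code = 2 ** (bit_depth - 1) - 1
    n_samples = int(round(sample_rate_hz * duration_s))
    codes = []
    for n in range(n_samples):
        t = n / sample_rate_hz                          # moment the value is "frozen"
        voltage = math.sin(2 * math.pi * freq_hz * t)   # idealized input, -1.0 to 1.0
        codes.append(round(voltage * max_code))         # nearest integer step
    return codes

# Hypothetical example: one millisecond of a 1 kHz tone at 48 kHz, 16 bits.
codes = sample_and_quantize(1000, 48000, 16, 0.001)
print(len(codes), "samples; first few codes:", codes[:4])
```

Raising `sample_rate_hz` produces more measurements per second, while raising `bit_depth` makes each measurement finer. The two choices are independent of one another.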

Theory tells us that the rate at which the signal is sampled must be at least twice the highest frequency that we wish to reproduce. This is the Nyquist theorem, and it means that, to faithfully capture an analog audio signal that extends to the accepted upper threshold of 20 kHz, the signal must be sampled at a minimum of 40 kHz, or 40,000 samples per second.
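As a quick worked example of that rule, the short Python sketch below computes the minimum sample rate for a given bandwidth, and the highest frequency a given sample rate can represent. The helper names are hypothetical, and the three rates in the loop are simply common choices used for illustration.

```python
def minimum_sample_rate(highest_freq_hz):
    """Lowest sample rate that satisfies the twice-the-highest-frequency rule."""
    return 2 * highest_freq_hz

def nyquist_frequency(sample_rate_hz):
    """Highest frequency a given sample rate can represent (half the rate)."""
    return sample_rate_hz / 2

print("20 kHz audio needs at least", minimum_sample_rate(20_000), "samples per second")

for rate in (44_100, 48_000, 96_000):
    print(rate, "Hz sampling covers audio up to", nyquist_frequency(rate), "Hz")
```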

As an aside, the compact disc Red Book standard dictates a sampling frequency of 44.1 kHz because the early developers, Philips and Sony, wished to cover the generally accepted audio spectrum of human hearing while also fitting the resulting digital information onto videotape.

By fitting three samples into each active line of the video field, at a field rate of 50 Hz or 60 Hz, the developers were able to sample 44,100 times per second and save the data onto videotape, which was the digital audio storage and mastering precursor to the compact disc.
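The arithmetic behind that figure can be checked in a few lines of Python. The active-line counts used here (245 lines per field for 60 Hz video and 294 for 50 Hz video) are the commonly cited values and are an assumption of this sketch. They do not appear in the text above.

```python
SAMPLES_PER_LINE = 3  # from the description above

# Assumed figures: (fields per second, active lines per field used for audio)
video_formats = {
    "60 Hz video": (60, 245),
    "50 Hz video": (50, 294),
}

sample_rates = {}
for name, (fields_per_second, active_lines) in video_formats.items():
    sample_rates[name] = fields_per_second * active_lines * SAMPLES_PER_LINE
    print(name, "->", sample_rates[name], "samples per second")
```

Under these assumptions both video systems arrive at the same 44,100 samples per second, which is what allowed one sample rate to work with either standard.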

These days, the prevailing view is that higher sample rates are better. Extending the sampling frequency well beyond the minimum 40 kHz gives digital processing tools more room to operate on the signal and helps reduce alias signals.

Alias signals are components of the audio signal above half the sampling frequency (the Nyquist frequency) that are folded back into the captured signal, creating an unpleasant distortion.
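To make the fold-back concrete, here is a minimal Python sketch that predicts where an unfiltered tone would land after sampling. The function name `alias_frequency` is hypothetical, and the sketch assumes an idealized sampler with no anti-aliasing filter in front of it, which real converters do include.

```python
def alias_frequency(input_hz, sample_rate_hz):
    """Apparent frequency of a pure tone after ideal sampling.

    Tones at or below half the sample rate come through unchanged.
    Anything higher is mirrored back into the range below half the rate.
    """
    folded = input_hz % sample_rate_hz
    if folded > sample_rate_hz / 2:
        folded = sample_rate_hz - folded
    return folded

for tone in (10_000, 25_000, 30_000):
    print(tone, "Hz sampled at 44.1 kHz appears as", alias_frequency(tone, 44_100), "Hz")
```

A 30 kHz tone, inaudible on its own, would show up at 14.1 kHz, squarely in the audible band and with no musical relationship to the original.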

Someone once gave a good example of aliasing. A guy living in a cave was waiting for daylight. He stuck his head outside about every 25 hours. Starting at 8 o’clock at night (8 pm), he next looked outside 25 hours later when, unbeknownst to him, it was 9 pm and still dark.

Looking outside his pitch-black cave every 25 hours, he encountered night 10 times in a row, leading him to believe that the night was far longer than it really was.

That is what aliasing is all about. It’s a false reality, created by not sampling the signal of interest frequently enough. If the knucklehead had looked every hour he would have seen the true length of night.
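The cave story can be simulated directly. The Python sketch below assumes, purely for illustration, that it is dark from 6 pm to 6 am. The function names and the `max_looks` safety cap are hypothetical.

```python
def is_dark(hour_of_day):
    """Assumed night: 6 pm (18:00) up to, but not including, 6 am."""
    return hour_of_day >= 18 or hour_of_day < 6

def observe_night(start_hour, interval_hours, max_looks=1000):
    """Look outside at a fixed interval until daylight is seen.

    Returns (number of dark looks in a row, hours between the first
    and last dark look), the latter being how long the night seemed.
    """
    dark_looks = 0
    for k in range(max_looks):
        hour = (start_hour + k * interval_hours) % 24
        if not is_dark(hour):
            break
        dark_looks += 1
    apparent_hours = (dark_looks - 1) * interval_hours if dark_looks else 0
    return dark_looks, apparent_hours

print("Every 25 hours:", observe_night(20, 25))
print("Every hour:    ", observe_night(20, 1))
```

Starting at 8 pm, both observers see darkness 10 times in a row, but the 25-hour observer's looks span 225 hours while the hourly observer's span only 9. The slow sampling rate produces the false impression, not the night itself.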

The same goes for audio sampling. If we don’t make enough measurements within a period of time, we miss important audio information and end up with incorrect sounds, harmonically unrelated to what we really wanted to capture.