The realm of the audio engineer is broad, encompassing recording, radio, theatre, film, sports, television, live music and public speaking. While all of these disciplines require a similar core skill set, they differ in how those skills are applied.

The common ground is that they all involve the capture, manipulation and transmission of sound, which can lead us to believe that it’s relatively easy to switch from one role to another. But these roles are better defined by how they differ from each other: not just in the application of skills, but also in the environment in which the work is done and the time scale over which it unfolds. These key differences explain why certain people are better suited to certain roles than others.

The two areas most people are drawn to involve music directly: the recording studio and live shows. Studio recording engineer and front of house live sound engineer are probably the two most popular and sought-after roles. Many engineers dabble in both during their working lives, treating them as interchangeable, but while there’s a fair amount of overlap, they’re fundamentally different roles.

Eye-Opening Experience

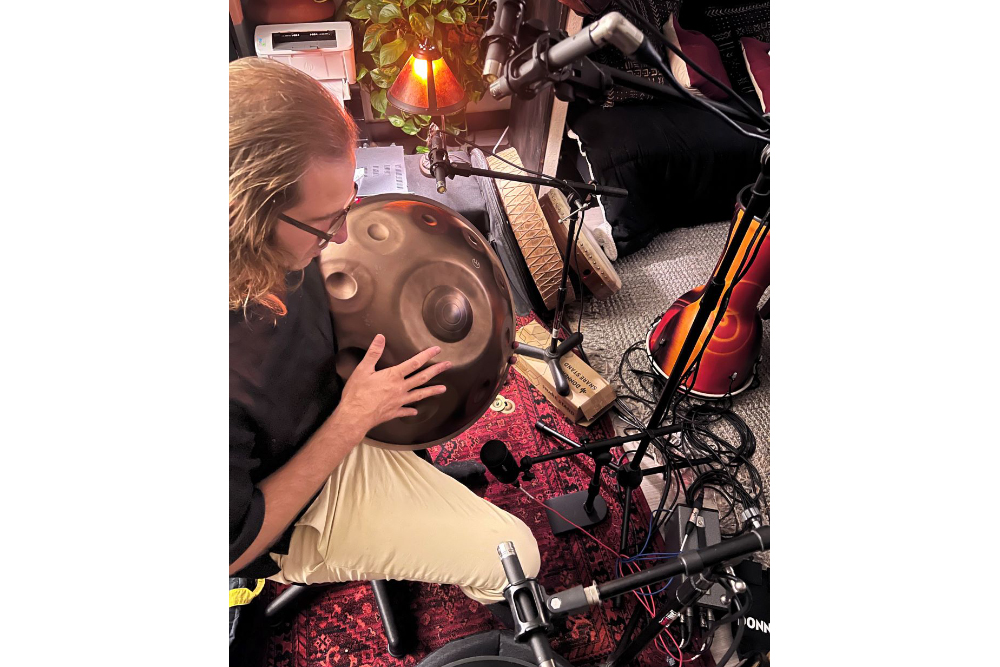

I started my engineering career in recording studios, following the traditional route of “tea boy” to tape op to engineer. At some point a musician friend suggested I mix his band’s gig because I was a sound engineer, I knew their music, and they didn’t fully trust the house engineer. So I went along to the gig confident that I could mix a live show based on my experience in the studio.

However, I soon realized that the only thing my studio experience had prepared me for was operating a mixing desk. I hadn’t appreciated how little time I would have to build the mix, nor how much sound would already be coming off the stage before I even raised a fader. As if that wasn’t enough to take in, the room was highly reverberant, making it hard to judge where the ambient sound stopped and my mix began.

Fortunately I was also a musician who’d played in a few bands so I knew the basics of how a gig should work and what was required of the engineer – but that didn’t prepare me for being the one in control. It’s easy to identify the problems in retrospect, but at the time I was just staring at the desk hoping to make it through the next half-hour without too many blasts of feedback. The fact that I managed to pull together a half-decent mix probably had more to do with the system being set up well and the input of the house engineer (who probably would have done a much better job than I).

Despite this “baptism by fire,” I would return to live sound time and time again, relishing the challenge. Once I got the hang of it I started to enjoy it greatly and eventually shifted my attention away from the studio and to the arena of live sound, where I’ve happily been working for many years now.

Learning Lessons

One of the biggest differences between live and studio sound is the time frame. A gig has a fixed, linear time frame: everything must happen between the moment you can get into the venue and the moment you need to be out, without fail.

This puts significant pressure on every aspect of the production. There are time constraints in the studio too, typically dictated by the budget, but the ultimate aim of producing high-quality, meaningful recordings is more likely to dictate how much time is available: if you need more time and can justify it to whoever is paying the bill, you’ll get more time. That isn’t to say the studio is a pressure-free environment. The need to capture the lightning of a brilliant performance puts pressure on the artists, and that pressure filters down to everyone involved.

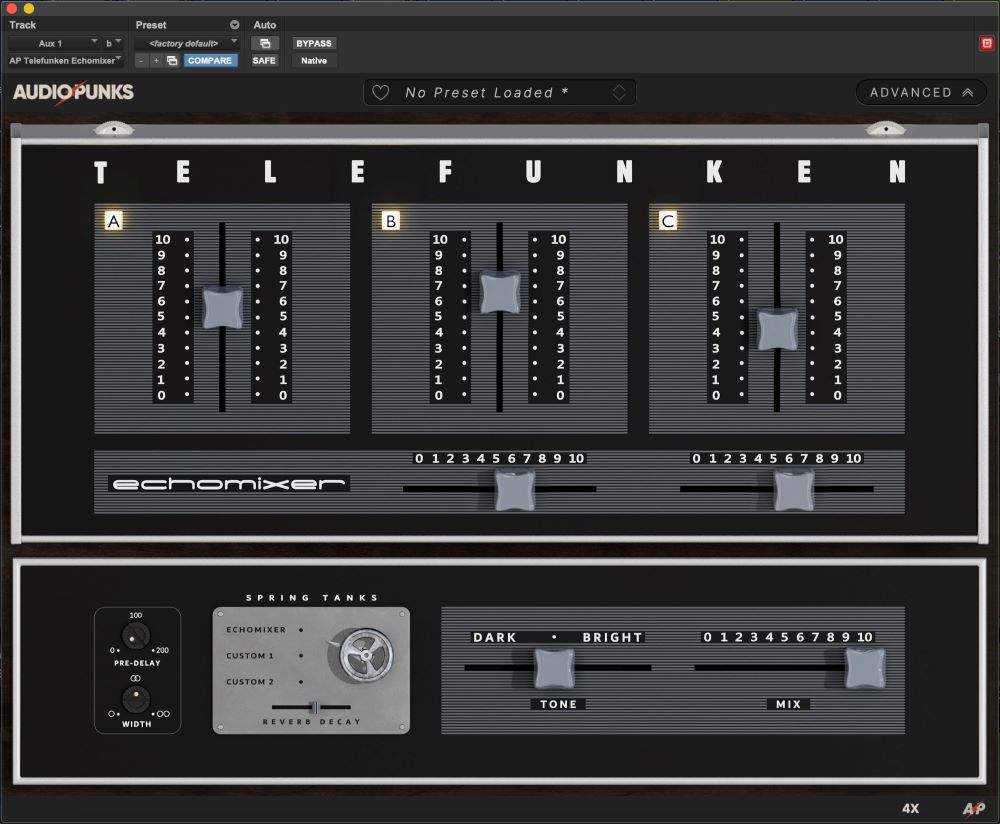

Another key difference is in the channel processing we apply.

The differences can be subtle but important. Starting out in small venues, I soon realized that using large amounts of additive EQ led to feedback issues, especially on vocals – if you boost certain frequencies to get the sound you want, then those frequencies are much more likely to feed back.

So I quickly adopted subtractive EQ practices and soon found that I could invariably get the sound I wanted by taking away the parts of the spectrum that weren’t needed while avoiding the increased risk of feedback.
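The feedback argument can be illustrated with a deliberately simplified model. This is a minimal sketch, assuming that a frequency band starts to ring once its loop gain (microphone to desk to PA and back into the microphone) approaches 0 dB, and ignoring phase, which in reality must also line up for feedback to build. The per-band loop-gain figures, the 3 dB safety margin and the function name `bands_at_risk` are all hypothetical illustration values, not measurements.

```python
# Hypothetical, simplified feedback model: a band is flagged as "at risk"
# when its loop gain (mic -> desk -> PA -> back into the mic) comes within
# a safety margin of 0 dB. Phase is ignored here, although real feedback
# also needs the returning signal to arrive roughly in phase.

# Hypothetical loop gain per band before any EQ, in dB (illustrative only).
loop_gain_db = {
    "250 Hz": -9.0,
    "1 kHz": -6.0,
    "2.5 kHz": -4.0,
    "5 kHz": -7.0,
}


def bands_at_risk(base_db, eq_db, margin_db=3.0):
    """Return the bands whose loop gain, after EQ, is within margin_db of 0 dB.

    An EQ boost adds directly to the loop gain of its band; a cut subtracts.
    """
    risky = []
    for band, gain in base_db.items():
        total = gain + eq_db.get(band, 0.0)
        if total >= -margin_db:
            risky.append(band)
    return risky


# Additive approach: boost the presence region to bring a vocal forward.
additive = {"2.5 kHz": 4.0, "5 kHz": 3.0}
# Subtractive approach: cut the low mids that aren't needed instead.
subtractive = {"250 Hz": -4.0}

print(bands_at_risk(loop_gain_db, additive))
print(bands_at_risk(loop_gain_db, subtractive))
```

Because an EQ boost adds directly to the loop gain of its band, the additive setting pushes the 2.5 kHz band up to 0 dB and flags it, while the subtractive setting leaves every band below the margin.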

Live sound also taught me to use compressors much more subtly. Compression makes the loud parts quieter, but once make-up gain is applied it also makes the quiet parts louder, and some of those quiet parts will be the frequencies that are on the edge of feeding back.
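The “quiet parts get louder” effect can be seen in a compressor’s static gain curve. This is a minimal sketch of a hard-knee curve with no attack or release behaviour, assuming a threshold of -20 dBFS, a 4:1 ratio and 6 dB of make-up gain; these settings and the function name `compressed_level_db` are hypothetical.

```python
def compressed_level_db(input_db, threshold_db=-20.0, ratio=4.0, makeup_db=6.0):
    """Output level of a hard-knee compressor's static curve, in dB.

    Below the threshold the signal passes unchanged; above it, every
    `ratio` dB of input yields only 1 dB of output. Make-up gain is then
    added to everything, which is what lifts the quiet parts.
    """
    if input_db > threshold_db:
        out_db = threshold_db + (input_db - threshold_db) / ratio
    else:
        out_db = input_db
    return out_db + makeup_db


for level in (-40.0, -20.0, -4.0):
    print(level, "->", compressed_level_db(level))
```

With these settings a quiet passage at -40 dBFS comes out 6 dB louder, at -34 dBFS, while a peak at -4 dBFS comes out 6 dB quieter, at -10 dBFS. On a live vocal channel, that extra 6 dB of gain during quiet moments can be enough to push a frequency that was already close to feeding back over the edge.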

I often deploy compressors as soft limiters to stop certain parts of the performance from jumping out of the mix, and also as dynamic equalizers to stop certain frequencies from altering the character of a sound in an unwanted way (e.g., to prevent an acoustic guitar from sounding harsh when it’s played harder).

The way a performance is addressed in the studio in terms of channel and dynamics processing differs dramatically due to the simple fact that the performance is a known quantity. Once recorded, we can review it and familiarize ourselves with the full range of the dynamic and spectral content before applying any processing. This enables us to tailor our gates, compressors and EQ precisely to the performance.

In live sound, on the other hand, we need to have the processing in place before the performance even happens, and we need to set it up so that it can handle all eventualities. Sound check lets us do much of this without bothering the audience, but it’s quite common for musicians to play or sing louder come show time due to the excitement and adrenaline of the moment, which can radically alter the timbre and volume of the instruments and/or voices.

Ambience & Acoustics

If we look back at the history of recording, the advent of higher and higher track counts enabled us to close-mike multiple sources simultaneously, delivering unparalleled freedom to shape the sound of the final mixes. We were able to virtually eliminate the ambient sound of the room in which something was recorded (if desired) and place the final mix, or aspects of it, in completely artificial acoustic spaces using reverb. If something is recorded intelligently, the engineer has endless possibilities for shaping the ambient sound of the finished mix.

In the live world, we use similar close-miking techniques but rarely gain complete control of the overall sound in the same way: what the audience hears is a combination of the backline, the monitors and the PA. This means the same audio signal arrives at different times at different places in the room, an effect further exacerbated by the reverberant field of the room itself. This complex interaction of multiple sources and reflections varies greatly with the size of the room and the composition of its reflective surfaces. And as if that isn’t enough to deal with, the overall sound is radically different when the room is empty (i.e., during sound check) than when it’s full (i.e., at show time).

Thankfully, there are straightforward ways to minimize this issue: we can screen off the drum kit, move the amps off stage, use in-ear monitors, and delay the PA to sync with the backline. Even so, we never gain complete control of the acoustics in the way we can in the studio. Fortunately, good live engineers know how to exploit the acoustics of a room to benefit the mix. Those multiple arrival times can render a kind of soft-focus effect that can flatter the mix (as long as the reflections aren’t overwhelming), and an enclosed space can often really help to glue the mix together.
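Delaying the PA to sync with the backline comes down to simple arithmetic: the extra distance the backline’s sound has to travel, divided by the speed of sound. This is a minimal sketch, assuming a speed of sound of roughly 343 m/s (air at about 20 °C) and distances measured from a single listening position; the function name `pa_delay_ms` and the example distances are hypothetical.

```python
# Approximate speed of sound in air at about 20 °C (an assumption; it
# rises slightly with temperature).
SPEED_OF_SOUND_M_S = 343.0


def pa_delay_ms(backline_distance_m, pa_distance_m, c=SPEED_OF_SOUND_M_S):
    """Delay (ms) to add to the PA so it arrives with the backline at a listener.

    Only the path-length difference matters: if the backline is farther
    away than the PA, the PA's sound would otherwise arrive first.
    """
    difference_m = backline_distance_m - pa_distance_m
    if difference_m <= 0:
        return 0.0  # the PA already arrives at the same time or later
    return difference_m / c * 1000.0


# Hypothetical listener: backline 12 m away, PA 9 m away.
print(round(pa_delay_ms(12.0, 9.0), 2))
```

With the backline 12 m and the PA 9 m from the listener, the PA needs just under 9 ms of delay. Because the path difference changes from seat to seat, this kind of alignment is only exact at the chosen reference position.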

This becomes quite apparent when you start working outdoor festival shows. In my first proper run of festivals, I noticed that my mix seemed much wider than usual: the individual mix elements each sat in their own distinct space and weren’t interacting properly. Then I realized that I’d previously been relying on the walls to meld the mix together, so I started using reverb differently to re-create a coherent acoustic space in the open air. When I returned to indoor shows, I switched back to my previous approach, but as I moved on to larger rooms and arenas, I discovered that I needed to mix more as I would for an outdoor show.

Vice Versa

It drives home one of the fundamental differences between the studio and touring: in the studio the music changes but the environment stays the same, whereas on the road the music stays the same while the environment changes.

This explains why my career focus shifted from the studio to live sound. I have a short attention span, so the repetition and long days of the recording environment proved quite challenging. Out on the road, I enjoyed the daily challenge of figuring out different consoles and rooms, all on a tightly constrained “get in, get it done and get out” time scale.

The live mix itself is also fleeting. Things happen quickly, and you constantly need to zoom in on the mix to tweak individual elements, zoom out to review the whole, and then do it all again from bar to bar, song to song and set to set.

These are my insights into how these two common roles differ; those of you who have worked “both sides of the aisle” may have others. Being able to switch between them is about employing the appropriate mental skills and adapting to the context in which you’re working.