Audio metering is a key part of practically any sound system, be it live, in the recording studio, radio and TV broadcast, or even consumer audio. The ability to monitor the level of the audio to ensure a smooth signal flow through and between components is something we now take for granted. To find out how metering evolved we need to go back to the tail end of the 19th century when people first started to harness electricity to capture and transmit sound.

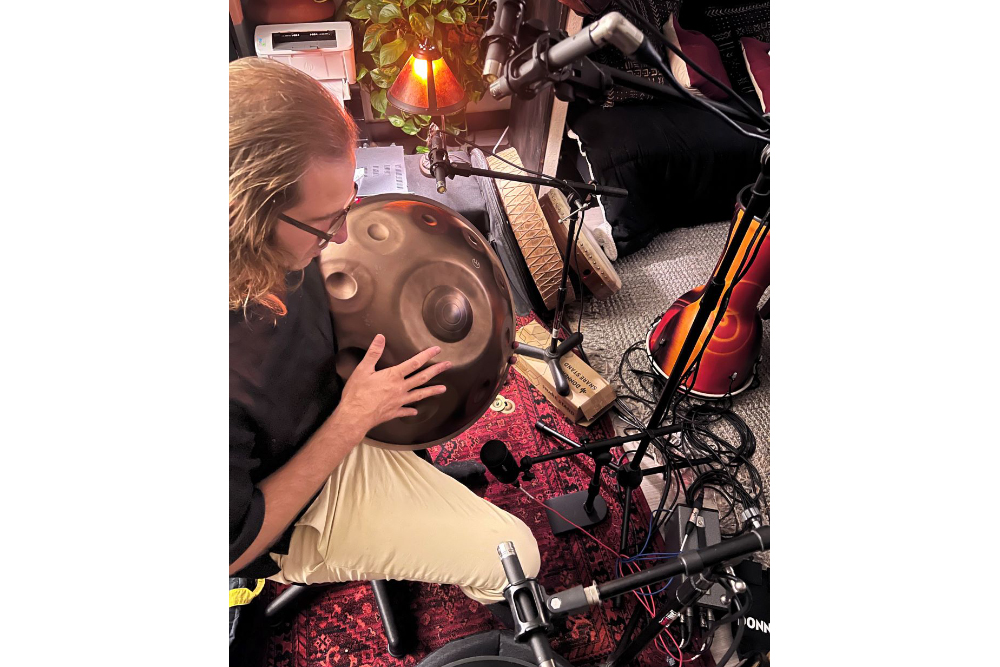

Two of the key components of most audio systems are the microphone and the amplifier, both of which were developed for use in the telephone. The microphone came into existence in the first handsets in 1876, but we had to wait until the invention of the vacuum tube in 1907 before things really started to get interesting.

The telephone industry soon realized the huge potential of long-distance telephony, so one of the first applications for the vacuum tube was in amplifiers called repeaters. These devices were dotted along telephone lines to periodically boost the signal and make up for the loss of level due to the impedance of the cable. To measure this signal loss, the telephone engineers came up with the unit “mile of standard cable” (MSC), where each unit represented the loss of signal over one mile of standard 19-gauge telephone cable at a frequency of 5,000 radians per second (i.e., 795.8 Hz) – a loss that just so happened to correspond to the minimum change detectable by the average listener.
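The 795.8 Hz figure follows from dividing the angular frequency by 2π. A minimal sketch of that conversion is shown here; the function name is illustrative only.

```python
import math

def angular_to_hertz(omega_rad_per_s):
    """Convert an angular frequency in rad/s to a frequency in Hz."""
    return omega_rad_per_s / (2.0 * math.pi)

# The MSC reference frequency quoted in the text.
msc_frequency_hz = angular_to_hertz(5000.0)
print(round(msc_frequency_hz, 1))  # 795.8
```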

In 1923 a new unit of measurement, the Transmission Unit (TU), was proposed and adopted. It quickly replaced the MSC because it measured signal loss independently of frequency. This meant it could be used outside of the telephone industry, and it soon became popular with radio broadcasters. The Bell Telephone Company then introduced the “bel” in 1928, named after company founder Alexander Graham Bell, as a unit for expressing relative power levels, and soon realized that a unit one-tenth of the size was better for practical purposes. Thus the decibel (dB) was born.
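To make the relationship between the two units concrete, here is a minimal sketch. It relies on the standard definition of the bel as the base-10 logarithm of a power ratio, which the text above does not spell out, and the function names are illustrative.

```python
import math

def power_ratio_to_bels(p_out, p_in):
    """Level difference in bels between two powers (same units)."""
    return math.log10(p_out / p_in)

def power_ratio_to_decibels(p_out, p_in):
    """Level difference in decibels: one bel is ten decibels."""
    return 10.0 * power_ratio_to_bels(p_out, p_in)

# Halving the power is a loss of roughly 3 dB;
# a tenfold increase is exactly 1 bel, or 10 dB.
print(round(power_ratio_to_decibels(0.5, 1.0), 2))
print(power_ratio_to_decibels(10.0, 1.0))
```

A one-bel step is a tenfold change in power, which is too coarse for everyday level adjustments. That is why the smaller decibel became the working unit.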

Fourth Value

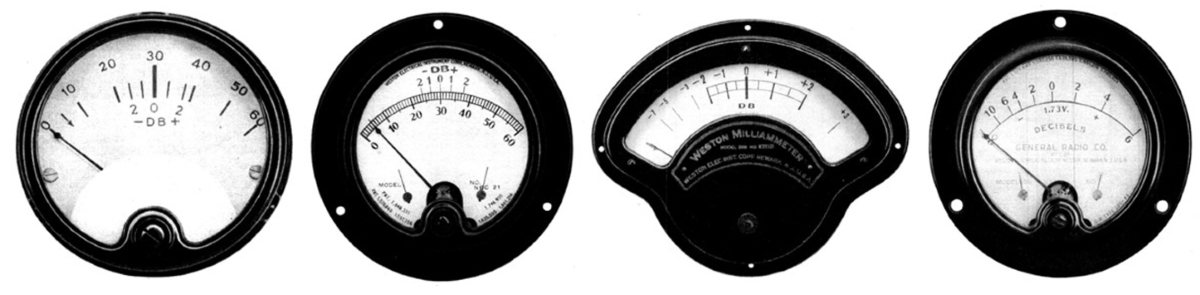

For the first time, we had an internationally recognized unit to represent the level of audio signals. However, there was still no standard for level metering. Various indicators existed, but they often measured different characteristics of the signal and displayed them on a range of scales and color schemes that only served to confuse the operators.

The primary problem was that the three common measurements of sinusoidal waves used in electrical engineering – average, root-mean-squared (RMS) and peak – aren’t great at conveying the objective levels of complex and non-periodic waveforms, such as those produced by speech and music.
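A rough illustration of the problem: the sketch below computes the three measurements for a steady sine wave and for a short burst followed by silence. The burst is a crude, hypothetical stand-in for a speech-like transient, not real program material, and the function names are illustrative.

```python
import math

def rectified_average(samples):
    """Mean of the absolute sample values."""
    return sum(abs(s) for s in samples) / len(samples)

def rms(samples):
    """Root-mean-squared value of the samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def peak(samples):
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)

N = 1000      # samples in the analysis window (assumed)
CYCLES = 10   # sine cycles inside the window (assumed)

# Steady tone: ten full cycles of a unit-amplitude sine.
sine = [math.sin(2.0 * math.pi * CYCLES * k / N) for k in range(N)]

# Transient: keep only the first cycle, then silence.
burst = [s if k < N // CYCLES else 0.0 for k, s in enumerate(sine)]

for name, sig in (("sine", sine), ("burst", burst)):
    print(f"{name:5s} avg={rectified_average(sig):.3f} "
          f"rms={rms(sig):.3f} peak={peak(sig):.3f}")
```

Both signals share the same peak, yet their average and RMS values differ widely. For a pure sine the three values are in fixed proportion, so any one of them describes the signal. For program material they are not, and no single figure tells the whole story.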

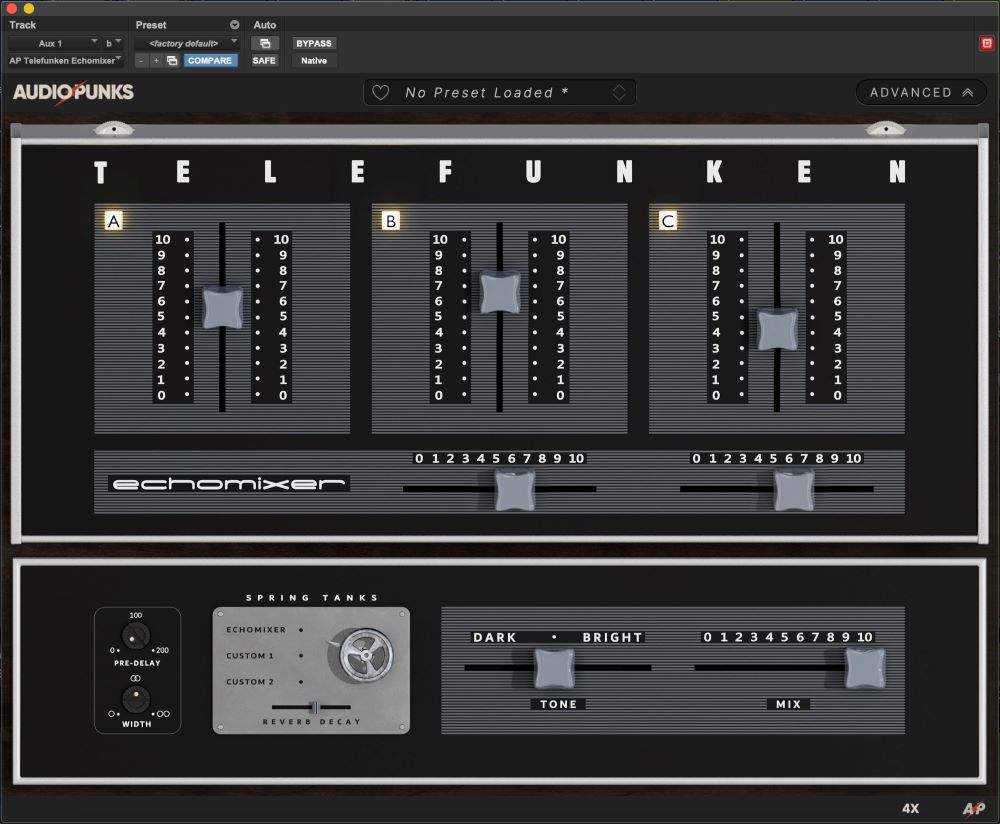

In 1938, engineers at CBS, NBC and Bell Laboratories got together and developed a new standard reference level indicator for audio signals. It involved conceiving a fourth value that they named “volume” – a value that was better suited to conveying the magnitude of complex signals. It was defined as a purely empirical concept, evolved to meet a practical need and not definable by a precise mathematical formula in terms of the familiar electrical units of power, voltage or current. This new standard volume indicator displayed a percentage scale as well as “volume units”, which is how it became known as the VU meter.

The VU meter employs a DC galvanometer (i.e. a moving coil ammeter) with a full wave copper oxide rectifier mounted within its case. The first VU meters were larger than their predecessors at 4.5 inches square and featured a larger scale that displayed the percentage on top in black and the VU values underneath in red, along with a red overload area. All of this was set against a cream background to make it easier to read.

To ensure consistency from unit to unit, strict specifications were developed, and regular calibration was encouraged. The calibration of 0 VU was achieved by connecting a 600-ohm load, through which flowed 1 milliwatt of sine wave power at 1,000 Hz. To help guarantee a consistent frequency response, that level reading was not allowed to differ by more than 0.2 dB between 35 Hz and 10 kHz, or by more than 0.5 dB between 25 Hz and 35 Hz and between 10 kHz and 16 kHz. Dynamic characteristics were also defined: the needle should reach 99 within 300 milliseconds of the signal being applied, and should then overshoot the 100 point by at least 1 and not more than 1.5 percent.
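The calibration figures translate into a reference voltage, and the frequency-response limits can be written as a simple check. The following is a minimal sketch under stated assumptions: the function names are hypothetical, and the band edges at 35 Hz and 10 kHz are assigned to the tighter 0.2 dB band, a choice the text does not specify.

```python
import math

REF_POWER_W = 0.001    # 1 milliwatt, from the text
REF_LOAD_OHMS = 600.0  # 600-ohm load, from the text

def reference_voltage_rms(power_w=REF_POWER_W, load_ohms=REF_LOAD_OHMS):
    """RMS voltage across the load, from P = V^2 / R."""
    return math.sqrt(power_w * load_ohms)

def allowed_deviation_db(freq_hz):
    """Permitted deviation from the 1 kHz reading, or None if out of range."""
    if 35.0 <= freq_hz <= 10_000.0:
        return 0.2  # band edges assumed to belong to the tighter band
    if 25.0 <= freq_hz <= 16_000.0:
        return 0.5
    return None     # the quoted limits do not cover this frequency

def response_in_tolerance(freq_hz, deviation_db):
    """True if a measured deviation is within the quoted limit."""
    limit = allowed_deviation_db(freq_hz)
    if limit is None:
        raise ValueError(f"{freq_hz} Hz is outside the specified range")
    return abs(deviation_db) <= limit

print(round(reference_voltage_rms(), 4))     # about 0.7746 V RMS
print(response_in_tolerance(30.0, -0.3))     # inside the 0.5 dB band
print(response_in_tolerance(1_000.0, 0.3))   # exceeds the 0.2 dB band
```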

The electro-mechanical nature of the VU meter, most notably the mass of the needle, was a key component in intentionally slowing down the measurement, which effectively averaged out the peaks and thus better reflected the perceived loudness of the signal rather than the electrical precision of its peak values. The clever thing about the VU meter is that rather than enabling accurate measurement of specific values (which isn’t always necessary), it lets the operator check more quickly that the levels are within the desired range. It’s a tribute to those early pioneering electrical engineers that the VU meter is still in use today, almost 80 years later.
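The averaging effect of the needle can be sketched by modelling the movement as a damped second-order system driven by the rectified signal level. This is an illustration, not the standard's definition. The damping ratio and natural frequency below are assumptions chosen so that the simulated step response lands near the 300 millisecond and 1 to 1.5 percent overshoot figures quoted earlier, and the drive is assumed to be already rectified and normalized so that 1.0 corresponds to a steady 100 reading.

```python
ZETA = 0.81      # assumed damping ratio of the movement
OMEGA_N = 13.5   # assumed natural frequency in rad/s
DT = 1e-4        # simulation time step in seconds

def simulate_needle(drive, dt=DT, zeta=ZETA, omega_n=OMEGA_N):
    """Return the needle position over time for a rectified drive signal."""
    pos, vel = 0.0, 0.0
    trace = []
    for level in drive:
        # Spring pulls toward the drive level; damping opposes motion.
        acc = omega_n ** 2 * (level - pos) - 2.0 * zeta * omega_n * vel
        vel += acc * dt   # semi-implicit Euler: update velocity first
        pos += vel * dt
        trace.append(pos)
    return trace

def step_metrics(duration_s=1.0, dt=DT):
    """Time to reach 0.99 and fractional overshoot for a sudden steady tone."""
    steps = int(round(duration_s / dt))
    trace = simulate_needle([1.0] * steps, dt=dt)
    rise_time = next((i + 1) * dt for i, p in enumerate(trace) if p >= 0.99)
    overshoot = max(trace) - 1.0
    return rise_time, overshoot

rise_time, overshoot = step_metrics()
print(f"reaches 99 after {rise_time * 1000:.0f} ms, "
      f"overshoot {overshoot * 100:.2f} percent")

# A 10 ms full-level transient followed by silence barely moves the needle.
transient = [1.0] * 100 + [0.0] * 9900
print(f"needle maximum for 10 ms transient: {max(simulate_needle(transient)):.3f}")
```

With these assumed parameters, a transient that would register at full scale on a peak-reading instrument deflects the simulated needle only a few percent. That is the smoothing behavior described above.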