Although discussions of digital audio conversion have filled several books, a fundamental understanding of two terms is particularly important to correctly using your computer-based recording system: sample rate and bit depth.

The conversion process is complex, and there are multiple ways to accomplish it. But no worries: We’re just going to discuss sample rate and bit depth at a basic level, as applied to linear pulse-code modulation (PCM), one of the most common conversion technologies.

Sampling Basics

At the most basic level, computers operate one step at a time by turning a succession of switches on or off at very high speed. Since computers “think” in discrete steps, in order to convert analog audio signals to the digital domain, it’s necessary to describe the continuous analog waveform mathematically as a succession of discrete amplitude values.

In an analog-to-digital converter, this is accomplished by capturing, at a fixed rate, a rapid series of short “snapshots” (samples) of a specified size. Each audio sample contains data that provides the information necessary to accurately reproduce the original analog waveform. Things like dynamic range, frequency content, and so on are all contained within this datastream. The instantaneous amplitude level in each sample is given the value of the nearest measuring increment, a process called quantization. By reproducing these values and playing them back in the same order and at the same rate at which they were captured, a digital-to-analog converter produces what is, in theory, a practically identical copy of the original waveform.
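To make the idea of fixed-rate snapshots and quantization concrete, here is a minimal Python sketch. It is an illustration only, not how a real converter is built: the function name `sample_and_quantize`, the 1 kHz test tone, and the 48 kHz / 16-bit settings are all assumptions chosen for the example.

```python
import math

def sample_and_quantize(freq_hz, sample_rate, bit_depth, num_samples):
    """Sample a full-scale sine wave at a fixed rate and quantize each sample.

    Hypothetical helper for illustration; a hardware converter does this
    electronically rather than by evaluating a formula.
    """
    # Largest positive integer code for a signed word of this bit depth.
    max_code = 2 ** (bit_depth - 1) - 1
    codes = []
    for n in range(num_samples):
        t = n / sample_rate                                # time of the n-th snapshot
        amplitude = math.sin(2 * math.pi * freq_hz * t)    # value in [-1.0, 1.0]
        codes.append(round(amplitude * max_code))          # snap to nearest increment
    return codes

# One full cycle of a 1 kHz tone at 48 kHz is 48 samples long.
codes = sample_and_quantize(freq_hz=1000, sample_rate=48000, bit_depth=16, num_samples=48)
print(codes[:6])
```

Playing the list back in the same order and at the same rate is, conceptually, what the digital-to-analog converter does on the way out.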

The rate of capture and playback is called the sample rate. The sample size—more accurately, the number of bits used to describe each sample—is called the bit depth or word length. The number of bits transmitted per second is the bit rate. Let’s take a look at this as it applies to digital audio.
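For uncompressed linear PCM, these three terms are tied together by simple arithmetic: bit rate equals sample rate times bit depth times the number of channels. The short sketch below works that out. The function name `pcm_bit_rate`, the `channels` parameter, and the example settings (44.1 kHz, 16-bit stereo and 48 kHz, 24-bit stereo) are assumptions added for illustration.

```python
def pcm_bit_rate(sample_rate_hz, bit_depth, channels=1):
    """Bits per second for uncompressed linear PCM (illustrative helper)."""
    return sample_rate_hz * bit_depth * channels

# Example settings chosen for illustration only.
stereo_16_bit = pcm_bit_rate(44_100, 16, channels=2)
stereo_24_bit = pcm_bit_rate(48_000, 24, channels=2)

print(stereo_16_bit)  # 1411200 bits per second
print(stereo_24_bit)  # 2304000 bits per second
```

Dividing either result by 8 gives bytes per second, which is a quick way to estimate how much disk space a recording will use.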

Digging A Bit Deeper

The on/off status of each switch in a computer is represented as 1 or 0, a system known as binary. Thus, a string of binary digits (bits) is used to describe anything a computer does, including manipulating and displaying text, images, and audio. Computers can manage entire strings of these bits at a time; a group of 8 bits is known as a byte; one or more bytes compose a digital word. Sixteen bits (two bytes) means that there are 16 digits in a word, each of them a 1 or 0; 24 bits (three bytes) means that there are 24 binary digits per word; and so on.
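As a quick illustration of words and bytes, the following Python sketch writes the same number as a 16-bit word and as a 24-bit word. The helper name `to_word` and the value 12345 are hypothetical, picked only to show the digits.

```python
def to_word(value, bit_depth):
    """Return an unsigned value as a string of exactly `bit_depth` binary digits.

    Illustrative helper; real audio samples are usually signed, which this
    sketch ignores for simplicity.
    """
    if not 0 <= value < 2 ** bit_depth:
        raise ValueError("value does not fit in the given bit depth")
    return format(value, f"0{bit_depth}b")

word16 = to_word(12345, 16)   # 16 binary digits
word24 = to_word(12345, 24)   # same value, padded to 24 binary digits

bytes16 = len(word16) // 8    # 8 bits per byte
bytes24 = len(word24) // 8

print(word16, bytes16)
print(word24, bytes24)
```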

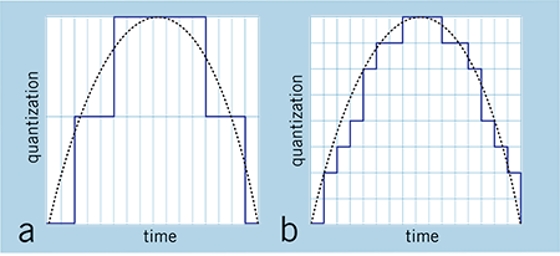

The number of bits in a word determines how precise the values are. Working with a higher bit depth is like measuring with a ruler that has finer increments: you get a more precise measurement. When the values are in finer increments, the converter doesn’t have to quantize as much to get to the nearest measuring increment.

FIG.1: If the bit depth is low (a), the signal will be inaccurately converted because it’s sampled in large increments. By increasing the bit depth (b), you get finer increments and a more accurate representation of the signal.

Thus, a higher bit depth enables the system to accurately record and reproduce more subtle fluctuations in the waveform (see Fig. 1). The higher the bit depth, the more data will be captured to more accurately re-create the sound. If the bit depth is too low, information will be lost, and the reproduced sample will be degraded. For perspective, each sample recorded at 16-bit resolution can contain any one of 65,536 unique values (2^16). With 24-bit resolution, you get 16,777,216 unique values (2^24), a huge difference!
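The ruler analogy can be checked with a few lines of Python. This sketch counts the unique values available at several bit depths and measures how far one arbitrary amplitude has to be rounded at each. The `quantize` helper, the test amplitude of 0.3, and the choice of bit depths are assumptions made for the example.

```python
def quantize(x, bit_depth):
    """Round x (in the range -1.0 to 1.0) to the nearest step of a signed word.

    Illustrative helper, not a model of any particular converter.
    """
    max_code = 2 ** (bit_depth - 1) - 1
    return round(x * max_code) / max_code

x = 0.3  # arbitrary test amplitude
levels = {}
errors = {}
for bits in (4, 8, 16, 24):
    levels[bits] = 2 ** bits                      # unique values per sample
    errors[bits] = abs(quantize(x, bits) - x)     # how far the value was rounded
    print(f"{bits:>2}-bit: {levels[bits]:>10,} values, rounding error {errors[bits]:.2e}")
```

For this test value, the rounding error shrinks at each step up in bit depth, which is the "finer ruler" effect described above.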